Chunking Strategies for RAG

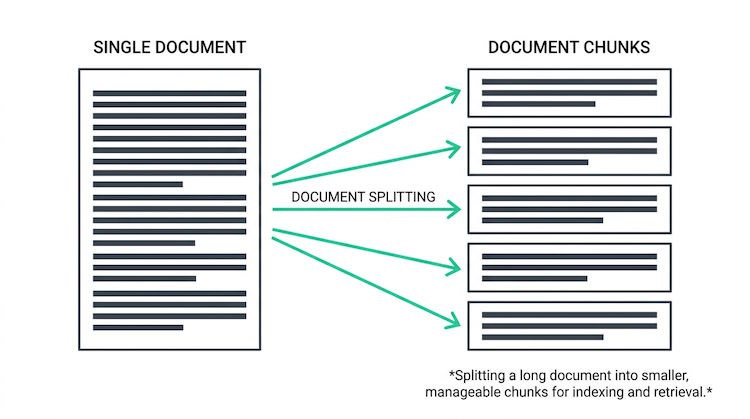

Chunking is how you split documents into pieces before embedding and indexing. Too large and retrieval may pull in irrelevant context; too small and you lose coherence. Good chunking balances size, overlap, and semantic boundaries so that the right chunks are retrieved and the LLM gets usable context.

Strategies

Fixed-size chunking (e.g. 512 tokens with a 50-token overlap) is simple and a reasonable baseline for many corpora. Splitting on sentence or paragraph boundaries helps keep each chunk meaningful on its own. Some systems use semantic chunking, which splits where the topic or meaning shifts. Overlap between adjacent chunks reduces the risk of cutting important context at a boundary.
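Splitting on sentence boundaries can be sketched as a greedy packer that keeps sentences whole. This is a minimal illustration, not a reference implementation: the function name `chunk_by_sentences`, its parameters, the regex-based sentence splitter, and the use of word counts in place of token counts are all assumptions made for the example.

```python
import re


def chunk_by_sentences(text: str, max_words: int = 200,
                       overlap_sentences: int = 1) -> list[str]:
    """Greedily pack whole sentences into chunks of roughly max_words words.

    Assumption: word counts stand in for token counts. A chunk can exceed
    max_words when a single sentence (plus carried overlap) is longer.
    """
    # Naive splitter: break on whitespace that follows '.', '!' or '?'.
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    chunks: list[str] = []
    current: list[str] = []
    count = 0
    for sentence in sentences:
        n = len(sentence.split())
        if current and count + n > max_words:
            chunks.append(" ".join(current))
            # Carry the last sentence(s) forward so neighbours overlap.
            current = current[-overlap_sentences:] if overlap_sentences > 0 else []
            count = sum(len(s.split()) for s in current)
        current.append(sentence)
        count += n
    if current:
        chunks.append(" ".join(current))
    return chunks


print(chunk_by_sentences("A b c. D e f. G h i. J k l.", max_words=6))
# ['A b c. D e f.', 'D e f. G h i.', 'G h i. J k l.']
```

Overlap here is measured in sentences rather than tokens, so a boundary never falls mid-sentence. A regex splitter will mis-handle abbreviations such as "e.g."; a proper sentence segmenter would be the natural replacement.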

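```python
def chunk_with_overlap(text: str, chunk_size: int = 512, overlap: int = 50) -> list[str]:
    # tokenize/detokenize are placeholders for your tokenizer's encode/decode.
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be non-negative and smaller than chunk_size")
    tokens = tokenize(text)
    step = chunk_size - overlap  # how far each window advances
    chunks = []
    for i in range(0, len(tokens), step):
        chunks.append(detokenize(tokens[i:i + chunk_size]))
        if i + chunk_size >= len(tokens):
            break  # this window already reached the end of the text
    return chunks
```

Testing matters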

Try different chunk sizes and overlaps on your documents. Measure retrieval accuracy for real questions. Long policies or manuals may need larger chunks or hierarchical retrieval so the model can zoom in on the right section.
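One way to compare chunk sizes and overlaps is to compute a top-k hit rate over a small set of real questions. The sketch below is self-contained and deliberately simplistic: `split_words`, `score`, `hit_rate`, and `sweep` are hypothetical helpers, words stand in for tokens, and the word-overlap scorer is only a placeholder for your actual embedding retriever.

```python
def split_words(text: str, size: int, overlap: int) -> list[str]:
    """Fixed-size word windows (assumes 0 <= overlap < size)."""
    words = text.split()
    step = size - overlap
    return [" ".join(words[i:i + size]) for i in range(0, len(words), step)]


def score(query: str, chunk: str) -> int:
    """Toy relevance: number of distinct query words present in the chunk."""
    return len(set(query.lower().split()) & set(chunk.lower().split()))


def hit_rate(chunks: list[str], cases: list[tuple[str, str]], k: int = 3) -> float:
    """Fraction of (question, expected phrase) cases found in the top-k chunks."""
    hits = 0
    for question, expected in cases:
        top = sorted(chunks, key=lambda c: score(question, c), reverse=True)[:k]
        hits += any(expected.lower() in c.lower() for c in top)
    return hits / len(cases)


def sweep(document: str, cases: list[tuple[str, str]],
          configs: list[tuple[int, int]], k: int = 3) -> dict[tuple[int, int], float]:
    """Hit rate for each (chunk size, overlap) configuration."""
    return {
        (size, overlap): hit_rate(split_words(document, size, overlap), cases, k)
        for size, overlap in configs
    }


document = ("Refunds are issued within 30 days. Shipping takes five business days. "
            "Support is open on weekdays.")
cases = [("when are refunds issued", "30 days"),
         ("how long does shipping take", "five business days")]
for config, rate in sweep(document, cases, [(8, 2), (4, 1)], k=1).items():
    print(config, rate)
```

In practice the `cases` list would come from questions your users actually ask, paired with a phrase or passage ID that a correct chunk must contain, and `score` would be replaced by the similarity search you run in production.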

```python
# Typical ranges (tokens)
# Short chunks: 128-256 (precise retrieval, may lose context)
# Medium: 512 (common default, balance)
# Long: 1024+ (more context, more noise)
# Overlap: 10-20% (reduces boundary cuts)
```
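To see what these ranges mean for index size, it can help to work out how many chunks a document produces. The helpers below are a small sketch of that arithmetic for a sliding window; the names `overlap_tokens` and `chunk_count` are made up for this example, and the 10,000-token document is an arbitrary illustration.

```python
import math


def overlap_tokens(chunk_size: int, overlap_ratio: float) -> int:
    """Convert an overlap percentage (e.g. 0.10) into a token count."""
    return round(chunk_size * overlap_ratio)


def chunk_count(total_tokens: int, chunk_size: int, overlap: int) -> int:
    """Number of sliding windows needed to cover total_tokens.

    Assumes 0 <= overlap < chunk_size.
    """
    if total_tokens <= 0:
        return 0
    if total_tokens <= chunk_size:
        return 1
    step = chunk_size - overlap  # tokens advanced per window
    return 1 + math.ceil((total_tokens - chunk_size) / step)


print(overlap_tokens(512, 0.10))    # 51
print(chunk_count(10_000, 512, 0))  # 20
print(chunk_count(10_000, 512, 50)) # 22
```

For this example, a 50-token overlap on 512-token chunks adds two chunks over a 10,000-token document, which is the storage and embedding cost paid for fewer boundary cuts.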