RAG ROI: Measuring Impact

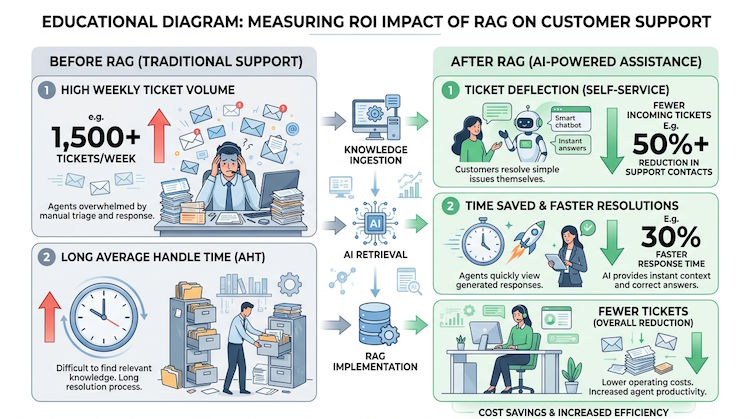

Measuring the ROI of a retrieval-augmented generation (RAG) system means tying it to outcomes: time saved, deflection rate, consistency of answers, and fewer errors. Capture baseline metrics before the RAG rollout, then compare them after deployment. Qualitative feedback from support agents and other employees helps too.

Metrics that matter

Track time to answer (for support or internal Q&A), the number of questions deflected from human agents, citation accuracy in evaluations, and user satisfaction. If the system refuses when it shouldn't, or hallucinates, track those failures as well and use them to tune retrieval and prompts.
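As a rough illustration of the quality metrics, the sketch below summarizes a small graded evaluation set. The `EvalRecord` fields, the `summarize` helper, and the sample records are all hypothetical; a real evaluation would use your own grading scheme and far more questions.

```python
from dataclasses import dataclass


@dataclass
class EvalRecord:
    """One graded question from a hypothetical evaluation set."""
    answerable: bool        # the knowledge base actually contains the answer
    refused: bool           # the assistant declined to answer
    citation_correct: bool  # the cited source supports the answer (grader's call)
    hallucinated: bool      # the answer contained unsupported claims


def summarize(records):
    """Compute citation accuracy, false-refusal rate, and hallucination rate."""
    answered = [r for r in records if not r.refused]
    answerable = [r for r in records if r.answerable]

    def rate(hits, total):
        # Avoid dividing by zero on an empty slice of the evaluation set.
        return hits / total if total else 0.0

    return {
        # Share of answered questions whose citation was judged correct.
        "citation_accuracy": rate(sum(r.citation_correct for r in answered), len(answered)),
        # Share of answerable questions the assistant refused anyway.
        "false_refusal_rate": rate(sum(r.refused for r in answerable), len(answerable)),
        # Share of answered questions containing unsupported claims.
        "hallucination_rate": rate(sum(r.hallucinated for r in answered), len(answered)),
    }


# Made-up sample data for illustration only.
records = [
    EvalRecord(answerable=True, refused=False, citation_correct=True, hallucinated=False),
    EvalRecord(answerable=True, refused=False, citation_correct=False, hallucinated=True),
    EvalRecord(answerable=True, refused=True, citation_correct=False, hallucinated=False),
    EvalRecord(answerable=False, refused=True, citation_correct=False, hallucinated=False),
]
summary = summarize(records)
print(summary)
```

Re-running the same evaluation set after each retrieval or prompt change gives a comparable number over time rather than a one-off impression.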

Before you claim ROI, capture a baseline that uses the same weeks of the year, the same channels, and comparable ticket types. After launch, compare cohorts with and without the assistant where possible, or use A/B routing. One-time anecdotes are not enough for finance or compliance teams; show trend lines over at least a few weeks.
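One way to turn a cohort comparison into a trend line is to compute the week-by-week gap between the two groups. The sketch below assumes you already have weekly average handle times for a control cohort and an assistant cohort; the numbers and the `weekly_differences` helper are invented for illustration.

```python
from statistics import mean

# Hypothetical weekly average handle times in minutes, one value per week.
weekly_handle_mins = {
    "control": [12.1, 11.8, 12.3, 12.0],   # tickets routed without the assistant
    "assistant": [9.5, 9.0, 8.6, 8.4],     # tickets routed with the assistant
}


def weekly_differences(control, treated):
    """Per-week gap (control minus treated); positive values mean time saved."""
    return [round(c - t, 2) for c, t in zip(control, treated)]


diffs = weekly_differences(
    weekly_handle_mins["control"], weekly_handle_mins["assistant"]
)
avg_saving = mean(diffs)                 # average minutes saved per ticket
consistent = all(d > 0 for d in diffs)   # did the assistant cohort win every week?

print(diffs, avg_saving, consistent)
```

A gap that holds across every week is more persuasive than a single good week, although a proper significance test would still be needed before treating the difference as conclusive.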

# Example: track deflection and time

before_rag = { "tickets_per_week": 200, "avg_handle_mins": 12 }

after_rag = { "tickets_per_week": 140, "avg_handle_mins": 8 }

deflection = (before_rag["tickets_per_week"] - after_rag["tickets_per_week"]) / before_rag["tickets_per_week"]

time_saved_per_ticket = before_rag["avg_handle_mins"] - after_rag["avg_handle_mins"]

Long-term value

Beyond immediate efficiency, RAG makes knowledge more accessible and answers more consistent, which can improve onboarding, compliance, and decision quality. ROI calculations can therefore include reduced training time and fewer errors caused by outdated or scattered information.

# Example ROI framing

# Input: tickets/week, avg handle time, cost per agent hour

# Output: deflection rate, time saved, $ saved

# Intangibles: consistency, faster onboarding, fewer wrong answers
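The framing above could be sketched as a small function. This is a minimal illustration, not a finance-grade model: the `weekly_roi` name, the $40 agent-hour cost, and the $250 weekly running cost are assumptions, and the ticket figures reuse the earlier example. Intangibles such as consistency and faster onboarding are deliberately left out because they need separate estimates.

```python
def weekly_roi(tickets_before, tickets_after,
               handle_mins_before, handle_mins_after,
               cost_per_agent_hour, weekly_rag_cost=0.0):
    """Estimate weekly deflection rate, agent hours saved, and dollars saved."""
    # Share of the baseline ticket volume that no longer reaches a human.
    deflection_rate = (tickets_before - tickets_after) / tickets_before

    # Total agent hours spent per week, before and after the rollout.
    hours_before = tickets_before * handle_mins_before / 60
    hours_after = tickets_after * handle_mins_after / 60
    hours_saved = hours_before - hours_after

    gross_saved = hours_saved * cost_per_agent_hour
    return {
        "deflection_rate": deflection_rate,
        "agent_hours_saved": hours_saved,
        "gross_dollars_saved": gross_saved,
        # Subtract what the system itself costs to run (assumed figure).
        "net_dollars_saved": gross_saved - weekly_rag_cost,
    }


result = weekly_roi(
    tickets_before=200, tickets_after=140,
    handle_mins_before=12, handle_mins_after=8,
    cost_per_agent_hour=40.0,   # assumed cost, replace with your own
    weekly_rag_cost=250.0,      # assumed hosting and maintenance cost
)
print(result)
```

Keeping gross and net savings separate makes it easier to show finance teams how sensitive the result is to the running-cost assumption.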