RAG vs Fine-Tuning

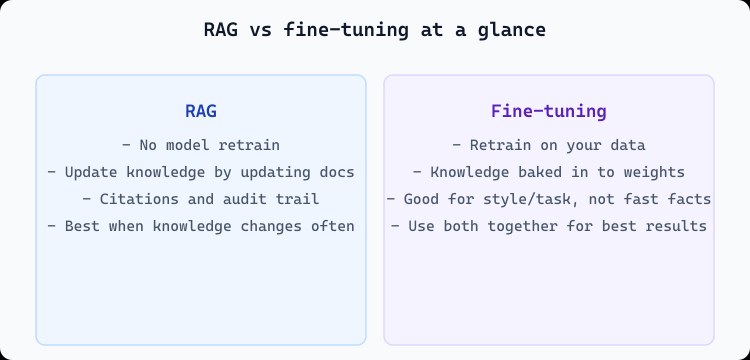

RAG and fine-tuning solve different problems. RAG retrieves relevant information at query time and feeds it to the model as context. Fine-tuning updates the model's weights on your data so it behaves differently or knows new facts. Both can be used together: fine-tune for style or task, and use RAG for up-to-date, citable knowledge.

RAG: no retraining

With RAG you keep the base model as-is. You update knowledge by updating the documents in your vector store. There's no training run, and because each answer is built from retrieved passages, it can cite its sources. Best when knowledge changes often or you need an audit trail.
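As a rough illustration of "update the documents, not the model", here is a minimal sketch. It assumes a toy in-memory index and a word-count "embedding" in place of a real embedding model and vector store; `ToyIndex`, `embed`, and the sample documents are hypothetical names made up for this example.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: lowercase word counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(count * b[term] for term, count in a.items())
    norm_a = math.sqrt(sum(c * c for c in a.values()))
    norm_b = math.sqrt(sum(c * c for c in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class ToyIndex:
    """Hypothetical in-memory replacement for a vector store."""

    def __init__(self) -> None:
        self.docs: dict[str, tuple[str, Counter]] = {}

    def upsert(self, doc_id: str, text: str) -> None:
        # Updating knowledge means re-embedding the document; the model is untouched.
        self.docs[doc_id] = (text, embed(text))

    def search(self, query: str, k: int = 1) -> list[tuple[str, str]]:
        # Rank stored documents by similarity to the query and return the top k.
        q = embed(query)
        ranked = sorted(
            self.docs.items(),
            key=lambda item: cosine(q, item[1][1]),
            reverse=True,
        )
        return [(doc_id, text) for doc_id, (text, _) in ranked[:k]]


index = ToyIndex()
index.upsert("pricing", "The Pro plan costs 20 dollars per month.")
index.upsert("support", "Support is available by email on weekdays.")
before = index.search("How much does the Pro plan cost?")

# The price changes: replace the document and search again. No training run.
index.upsert("pricing", "The Pro plan costs 25 dollars per month.")
after = index.search("How much does the Pro plan cost?")
```

The returned document id (`"pricing"` here) is what makes a citation possible: the answer can point back to the passage it was built from.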

Fine-tuning: baked-in behavior

Fine-tuning changes the model's parameters. It's great for consistent tone, format, or task behavior. It's less ideal for fast-changing facts because every change requires a new training run. Use it for how the model responds, not as the only source of truth.
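To make "how the model responds" concrete, the sketch below builds a small training file whose examples all follow one answer format. It assumes the chat-style JSONL layout (a `messages` list with `role` and `content`) that several hosted fine-tuning services accept; the exact schema depends on your provider, and the style rule and examples are invented for illustration.

```python
import json

# Hypothetical style rule the fine-tuned model should learn to follow.
SYSTEM_PROMPT = "Answer in two short sentences, then give one next step."

# Invented question/answer pairs that demonstrate the target format.
examples = [
    (
        "How do I reset my password?",
        "Open Settings and choose Reset password. A reset link arrives by email.\n"
        "Next step: check your inbox.",
    ),
    (
        "Can I export my data?",
        "Yes, exports are available from the Account page. Files are delivered as CSV.\n"
        "Next step: open Account and select Export.",
    ),
]


def to_record(question: str, answer: str) -> dict:
    """Wrap one example in the assumed chat-style training schema."""
    return {
        "messages": [
            {"role": "system", "content": SYSTEM_PROMPT},
            {"role": "user", "content": question},
            {"role": "assistant", "content": answer},
        ]
    }


# One JSON object per line; json.dumps escapes the newlines inside answers.
jsonl = "\n".join(json.dumps(to_record(q, a)) for q, a in examples)
```

Note that the examples teach a response shape, not a price list or policy text. Any fact placed in this file would need a new training run to change.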

# RAG: knowledge lives in the index
# Update docs → re-embed → search returns new content
# No model retrain

# Fine-tuning: knowledge in weights
# Update data → retrain → redeploy model
# Good for style/task; combine with RAG for facts

Combining both

In practice, many systems use both: fine-tune a model for your tone and format, then use RAG to inject up-to-date knowledge and citations at query time. The model handles how to phrase the answer; RAG supplies what to say. That way you get consistent style and verifiable, current content.
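One way to picture the split between "how to phrase" and "what to say" is the prompt assembled at query time. The sketch below assumes the retrieved passages arrive as `(doc_id, text)` pairs and that the resulting string is sent to a fine-tuned model; `build_prompt` and the sample passages are hypothetical, and the model call itself is left out.

```python
def build_prompt(question: str, passages: list[tuple[str, str]]) -> str:
    """Combine retrieved passages and the user question into one prompt.

    Each passage gets a numbered marker so the answer can cite it as [n].
    """
    sources = "\n".join(
        f"[{i}] ({doc_id}) {text}"
        for i, (doc_id, text) in enumerate(passages, start=1)
    )
    return (
        "Answer using only the sources below and cite them as [n].\n\n"
        f"Sources:\n{sources}\n\n"
        f"Question: {question}\nAnswer:"
    )


# Invented retrieval results standing in for a real search step.
passages = [
    ("pricing", "The Pro plan costs 25 dollars per month."),
    ("billing", "Invoices are issued on the first day of each month."),
]
prompt = build_prompt("How much is the Pro plan?", passages)
```

The retrieved sources carry the current facts, while tone and format come from the fine-tuned model that receives this prompt.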